The Shift Is Already Happening

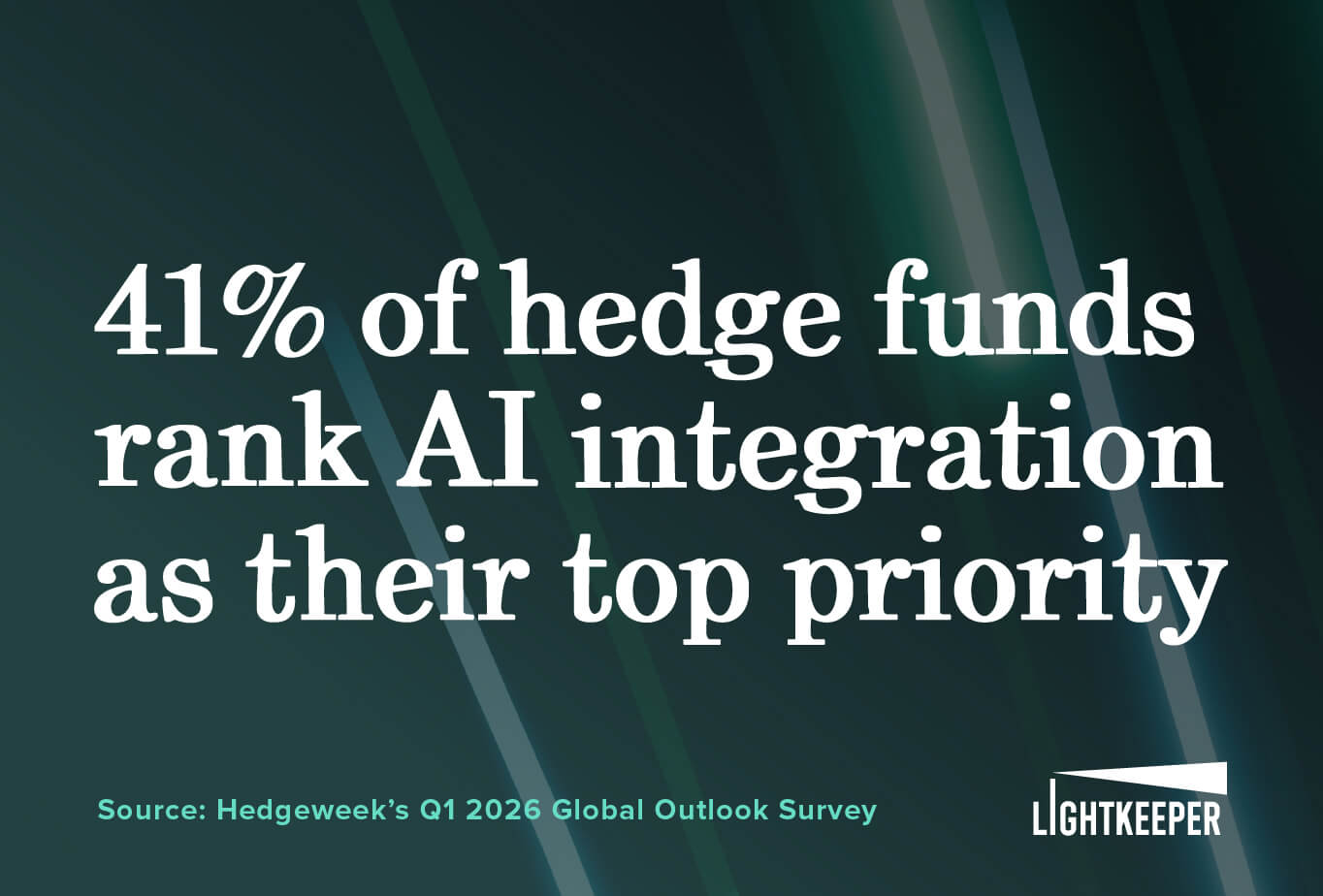

Agentic AI is no longer a concept that hedge funds are watching from a distance. It is arriving fast, and the gap between funds that are prepared and funds that are not is widening every quarter. Despite the growing attention surrounding autonomous agents, most firms are asking the wrong question. The challenge is not whether large language models are capable enough. It is whether the underlying investment infrastructure is ready to support them.

McKinsey data makes this concrete: while 62% of organizations are at least experimenting with AI agents, no more than 10% report scaling them in any given business function. That gap should give any fund considering an agentic deployment real pause. The primary obstacle is not model performance. It is legacy infrastructure that cannot support autonomous systems operating at speed. In institutional investing, an AI agent is only as effective as the data, controls, and workflows it operates within.

Traditional AI answers questions. Agentic AI takes action: planning tasks, executing them, and verifying results with minimal human input. That distinction changes the risk-reward equation entirely.

The Operational Problem Agentic AI Is Solving

To understand why agentic AI is gaining traction so quickly, you must understand what it is replacing.

Many hedge fund operations still rely on fragmented workflows built around spreadsheets, siloed systems, and manual processes that were never designed for systematic automation. Analysts spend significant time gathering, validating, and formatting data rather than generating alpha. Compliance teams run periodic manual reviews rather than continuous monitoring. Investor relations teams spend days on requests that AI-driven workflows may eventually address in minutes.

What many firms are discovering is that AI initiatives expose operational weaknesses that already existed beneath the surface. Portfolio, accounting, and risk data often live across disconnected systems with different structures, definitions, and update cycles. Humans can work around those inconsistencies manually. Autonomous systems cannot.

According to Gartner, 52% of organizations cite data quality as the single biggest blocker to deploying agentic AI. That figure is probably higher in investment management, where data flows across dozens of counterparties, each with its own formats, timing, and definitions. The fragmentation is deep, and it predates AI by decades.
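To make that fragmentation concrete, here is a minimal Python sketch of mapping two counterparty feeds onto one internal schema. The feed names, field names, and date formats are hypothetical; real prime broker and custodian files vary far more than this, and definitional differences (which date a position is "as of", for example) need business rules, not just field renames.

```python
from dataclasses import dataclass
from datetime import date, datetime


@dataclass(frozen=True)
class NormalizedPosition:
    symbol: str
    quantity: int
    as_of: date


# Hypothetical per-counterparty mappings: source field names plus each feed's date format.
FEED_SPECS = {
    "broker_a": {"symbol": "Ticker", "quantity": "Qty", "as_of": "AsOfDate", "date_fmt": "%m/%d/%Y"},
    "broker_b": {"symbol": "symbol", "quantity": "quantity", "as_of": "as_of", "date_fmt": "%Y-%m-%d"},
}


def normalize(feed: str, record: dict) -> NormalizedPosition:
    """Translate one raw record from a named feed into the common internal schema."""
    spec = FEED_SPECS[feed]  # KeyError for an unknown feed rather than a silent guess
    raw_qty = str(record[spec["quantity"]]).replace(",", "")  # "1,000" -> "1000"
    return NormalizedPosition(
        symbol=record[spec["symbol"]].strip().upper(),
        quantity=int(raw_qty),
        as_of=datetime.strptime(record[spec["as_of"]], spec["date_fmt"]).date(),
    )


a = normalize("broker_a", {"Ticker": "aaa ", "Qty": "1,000", "AsOfDate": "03/04/2025"})
b = normalize("broker_b", {"symbol": "AAA", "quantity": 1000, "as_of": "2025-03-04"})
print(a == b)  # True: both resolve to symbol AAA, quantity 1000, as of 2025-03-04
```

Note that `03/04/2025` means March 4 only because the feed spec says so. A human analyst absorbs that kind of implicit convention; an agent has to be told.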

What AI Agents Actually Require in Production Environments

Most conversations about agentic AI focus on the model, the interface, or the orchestration framework. The more immediate question is what the agent actually operates on.

In a hedge fund environment, agents need to query positions, compare exposures to limits, reconcile activity across disconnected portfolio management (PMS) and order management (OMS) systems, and produce outputs that compliance teams can stand behind. That means three things have to be true before any of this works: the agent needs a reasoning layer that can interpret financial context, it needs access to data it can trust, and every action it takes needs to leave an auditable trail.
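As a rough illustration of the "compare exposures to limits" and "auditable trail" requirements, here is a minimal Python sketch. `Position`, `AuditTrail`, and `check_concentration` are hypothetical names, not part of any product or library, and the sketch assumes positions have already been pulled from a single trusted, normalized source and valued in the fund's base currency. It deliberately leaves out the reasoning layer.

```python
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class Position:
    symbol: str
    market_value: float  # in the fund's base currency; negative for shorts


@dataclass
class AuditTrail:
    entries: list = field(default_factory=list)

    def record(self, action: str, **details) -> None:
        # Append-only: each check leaves a timestamped, JSON-serializable entry.
        self.entries.append(
            {"ts": datetime.now(timezone.utc).isoformat(), "action": action, **details}
        )


def check_concentration(positions, nav, limit_pct, audit):
    """Return symbols whose gross weight in NAV exceeds limit_pct, logging every check."""
    if nav <= 0:
        raise ValueError("NAV must be positive")
    breaches = []
    for p in positions:
        weight = abs(p.market_value) / nav  # gross weight, so shorts count too
        breached = weight > limit_pct
        # Log passes as well as breaches so reviewers can see what was checked.
        audit.record("concentration_check", symbol=p.symbol,
                     weight=round(weight, 4), limit=limit_pct, breached=breached)
        if breached:
            breaches.append(p.symbol)
    return breaches


audit = AuditTrail()
positions = [Position("AAA", 12_000_000), Position("BBB", -4_000_000)]
print(check_concentration(positions, nav=100_000_000, limit_pct=0.10, audit=audit))  # ['AAA']
print(json.dumps(audit.entries[0]))  # one serializable audit record
```

Logging passes as well as breaches is the design choice that matters here: a reviewer can reconstruct what the agent checked, not only what it flagged.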

The clearest use cases fall into two domains. On the middle- and back-office side: reconciling trades across prime broker feeds, flagging compliance breaches in real time, automating audit trails, and maintaining continuous operational surveillance. On the front-office side: synthesizing earnings calls and filings, monitoring intraday risk exposures, generating attribution summaries, and responding to LP queries with a speed and consistency no human team can sustain.
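Trade reconciliation, the first back-office use case above, can be sketched as a keyed comparison between two record sets. This is a minimal Python illustration under a strong assumption: the internal blotter and the prime broker feed have already been normalized to one schema with shared trade IDs. The `Trade` type and `reconcile` function are hypothetical, and exceptions are returned for review rather than acted on automatically.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Trade:
    trade_id: str
    symbol: str
    quantity: int
    price: float


def reconcile(internal, broker, price_tol=1e-6):
    """Compare internal trades with a broker feed, keyed on trade_id.

    Returns exceptions for review; nothing is corrected automatically.
    """
    ours = {t.trade_id: t for t in internal}
    theirs = {t.trade_id: t for t in broker}
    exceptions = {
        "missing_at_broker": sorted(ours.keys() - theirs.keys()),
        "missing_internally": sorted(theirs.keys() - ours.keys()),
        "mismatched": [],
    }
    for tid in sorted(ours.keys() & theirs.keys()):
        a, b = ours[tid], theirs[tid]
        if a.symbol != b.symbol or a.quantity != b.quantity or abs(a.price - b.price) > price_tol:
            exceptions["mismatched"].append(tid)
    return exceptions


internal = [Trade("T1", "AAA", 100, 10.0), Trade("T2", "BBB", -50, 20.0), Trade("T3", "CCC", 10, 5.0)]
broker = [Trade("T1", "AAA", 100, 10.0), Trade("T2", "BBB", -40, 20.0), Trade("T4", "DDD", 5, 1.0)]
print(reconcile(internal, broker))
# {'missing_at_broker': ['T3'], 'missing_internally': ['T4'], 'mismatched': ['T2']}
```

In practice the hard part is the assumption, not the loop: matching only works once identifiers, sign conventions, and price precision agree across feeds.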

None of this works unless the underlying data is clean, standardized, and connected. If it is not, agents do not simply underperform; they scale operational misinformation at machine speed.

Why Infrastructure Readiness Matters More Than You Think

Traditional AI tools fail silently. Agentic AI fails actively. A wrong answer in a chat window is unfortunate but contained. When an autonomous system makes a bad decision, it can trigger downstream actions, initiate workflows, and compound errors across connected systems before anyone has a chance to review them.

Consider what this looks like in practice. An agent monitoring portfolio exposure pulls position data from two systems running on different update cycles: one refreshes intraday, the other lags behind on overnight batch processing. The agent reads the inconsistency as a genuine concentration breach and automatically escalates a compliance alert. The operations team scrambles to investigate, and the alert turns out to be a data timing issue, not an actual breach. But the workflow has already been initiated: the compliance log now has an entry that requires a written response, and the portfolio manager has lost an hour to an escalation that should never have happened. Now run that scenario across a dozen agents operating simultaneously.
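One way to keep that scenario from becoming an escalation is to make the agent check data freshness before it acts. The Python sketch below assumes each source reports an as-of timestamp; `Snapshot`, `evaluate_breach`, and the 15-minute skew tolerance are hypothetical choices for illustration, not a recommended threshold.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Snapshot:
    source: str
    as_of: datetime      # when this source's data was last refreshed
    exposure_pct: float  # exposure to the name as a fraction of NAV


def evaluate_breach(snapshots, limit_pct, max_skew=timedelta(minutes=15)):
    """Return 'ok', 'breach', or 'hold_for_review'.

    If the sources' as-of times are further apart than max_skew, the agent does
    not escalate; it flags a possible data-timing issue for a human instead.
    """
    if not snapshots:
        raise ValueError("need at least one snapshot")
    times = [s.as_of for s in snapshots]
    if max(times) - min(times) > max_skew:
        return "hold_for_review"
    if any(s.exposure_pct > limit_pct for s in snapshots):
        return "breach"
    return "ok"


now = datetime(2025, 3, 4, 14, 0, tzinfo=timezone.utc)
intraday = Snapshot("intraday_pms", now, 0.11)
overnight = Snapshot("overnight_batch", now - timedelta(hours=14), 0.08)
print(evaluate_breach([intraday, overnight], limit_pct=0.10))  # hold_for_review
```

The third outcome exists because "the data disagree" and "the portfolio is in breach" are different findings, and only a human should decide which one applies.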

Funds that have already invested in data normalization, cross-system integration, governance controls, and audit-ready reporting pipelines are in a far stronger position to deploy agentic AI responsibly. Those without that foundation will struggle to move beyond experimentation or find themselves managing operational and regulatory exposure that compounds as automation scales.

The Upside of Getting It Right

For all the complexity of getting there, the upside is worth stating plainly. The single biggest constraint in investment management has never been a shortage of data. It has been the time and resources needed to turn data into actionable ideas: time to analyze every position, synthesize every report, and stress-test every assumption. Agentic AI will not change what good investing looks like, but it will change the multiplier on how much a team can accomplish. A fund that once had the bandwidth to run analyst reviews annually can run them quarterly, and the deep dive on trade efficiency that kept getting pushed to the back burner can run every week. For firms with the infrastructure to support it, that is not an incremental gain. It raises the ceiling on what a lean, high-conviction team can do.

Where Lightkeeper Fits In

At Lightkeeper, we are actively evaluating how agentic AI will fit into the future of investment management workflows and infrastructure. We are approaching the development of agentic capabilities deliberately because we believe these systems only become valuable when the operational foundation underneath them is reliable.

Over the past 15 years, we have focused on solving many of the same challenges that autonomous systems now expose: fragmented investment data, disconnected workflows, inconsistent calculations, and the need for auditability across complex environments. Performance analytics, attribution, risk, and reporting all depend on clean, connected, and trusted data flowing consistently across systems.

That foundation matters because agentic AI does not operate in isolation. If those environments are fragmented or inconsistent, automation does not remove operational risk. It amplifies it.

We believe firms that have already invested in data normalization, governance, integration, and trusted reporting infrastructure will be in a much stronger position to adopt agentic workflows responsibly as the technology matures. In many ways, the operational discipline required for institutional investing is becoming the same discipline required for effective AI adoption.

As the industry moves forward, our focus remains the same as it has always been: helping investment teams operate with greater clarity, consistency, and confidence in the data underlying their decisions.

Human Judgment Remains the Constant

The goal of agentic AI in investment management is not to remove humans from the process. It is to remove the friction that prevents humans from doing their highest-value work.

Effective implementations will streamline reporting, compliance checks, and operational coordination while preserving human oversight where judgment and accountability matter most. Approval gates, review checkpoints, and robust audit trails are not optional. In institutional finance, trust and explainability are table stakes.
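An approval gate can be as simple as routing each agent-proposed action by risk tier. The Python sketch below assumes someone upstream has already decided which actions require sign-off; `ProposedAction`, `ApprovalGate`, and the status values are hypothetical, and a production version would persist the history rather than hold it in memory.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    PENDING = "pending"
    AUTO_EXECUTED = "auto_executed"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class ProposedAction:
    action_id: str
    description: str
    requires_approval: bool
    status: Status = Status.PENDING
    history: list = field(default_factory=list)  # (event, actor) pairs


class ApprovalGate:
    """Low-risk actions proceed directly; everything else waits for a named reviewer."""

    def __init__(self):
        self.pending = {}

    def submit(self, action: ProposedAction) -> Status:
        action.history.append(("submitted", "agent"))
        if action.requires_approval:
            self.pending[action.action_id] = action  # held until decide() is called
        else:
            action.status = Status.AUTO_EXECUTED
            action.history.append(("auto_executed", "agent"))
        return action.status

    def decide(self, action_id: str, reviewer: str, approve: bool) -> Status:
        action = self.pending.pop(action_id)  # KeyError if nothing is pending under this id
        action.status = Status.APPROVED if approve else Status.REJECTED
        action.history.append((action.status.value, reviewer))
        return action.status


gate = ApprovalGate()
summary = ProposedAction("A1", "Generate internal attribution summary", requires_approval=False)
alert = ProposedAction("A2", "Escalate compliance breach alert", requires_approval=True)
print(gate.submit(summary).value)  # auto_executed
print(gate.submit(alert).value)    # pending
print(gate.decide("A2", reviewer="compliance_officer", approve=False).value)  # rejected
print(alert.history)  # [('submitted', 'agent'), ('rejected', 'compliance_officer')]
```

Recording the reviewer's name alongside each decision is what turns the gate into an audit trail as well as a control.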

This is how Lightkeeper thinks about technology broadly. The goal is to give portfolio managers, analysts, and operations teams better information, faster, with greater confidence in the underlying data. Whatever agentic capabilities emerge across the industry, the firms that succeed will be the ones that treat automation and human judgment as complementary, not competing.

What to Do Now

The firms that benefit most from agentic AI will not be the ones who move fastest to deploy an agent framework. They will be the ones who did the harder foundational work first: integrating their systems, normalizing data across prime brokers and custodians, establishing governance controls, and building reporting infrastructure that every function of the firm can trust.

Put simply: if those foundations are in place, agentic AI becomes a meaningful accelerant. Without them, it becomes a liability.

The question worth asking today is not whether your fund should be thinking about agentic AI. It should be. The real question is whether your data infrastructure is ready to support it. Because the model is the easy part. The data always was, and remains, the hard part.

As agentic systems move into production environments, operational infrastructure will become a competitive differentiator rather than a back-office concern.

Sources

- McKinsey & Company, AI adoption and scaling research (2025/2026)

- Gartner, enterprise agentic AI adoption and deployment research (2025/2026)